Computer Simulation for Game Design

Faster iterations.

Better games.

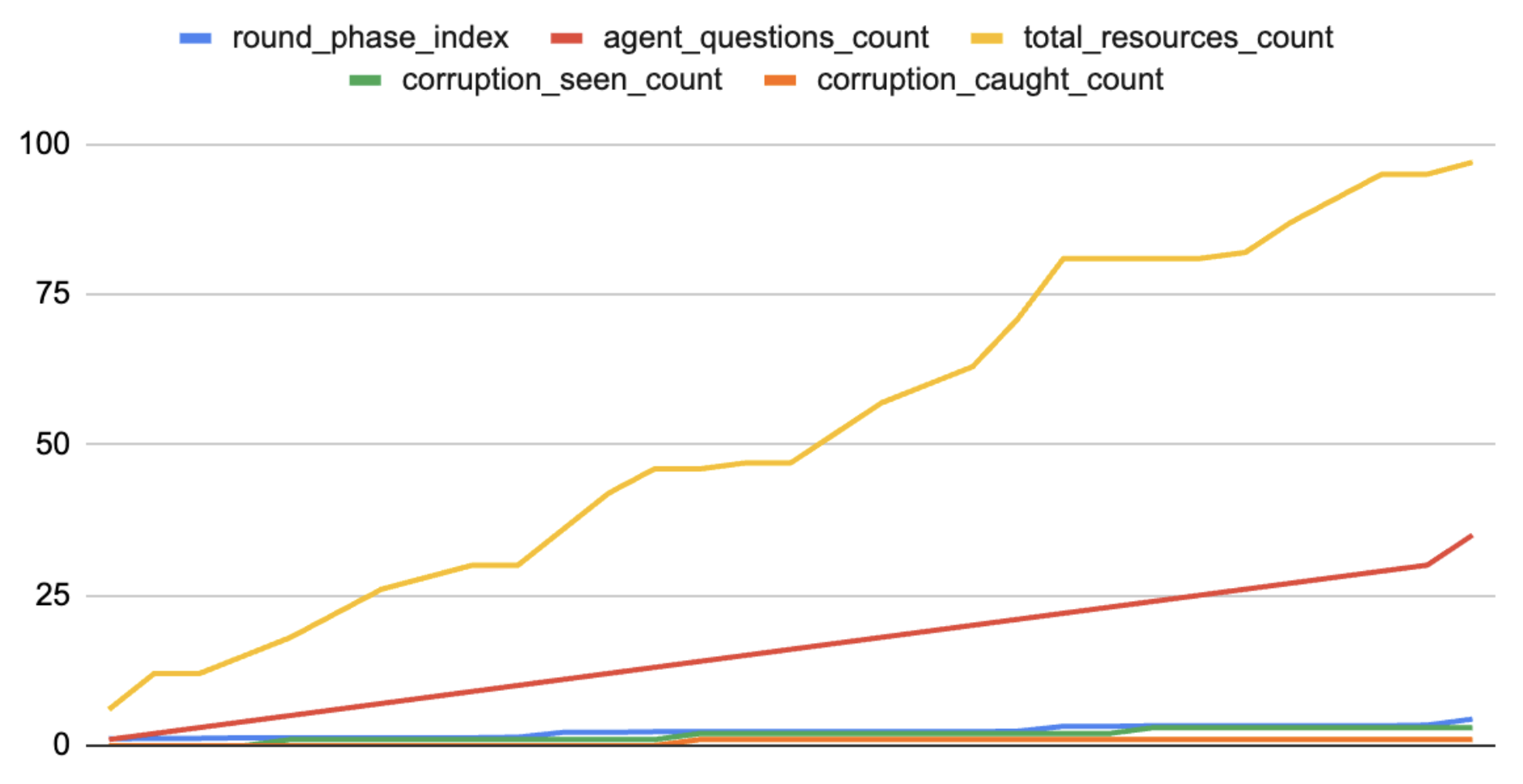

Good game design is iterative. The loop of prototype → playtest → evaluate → design is where games get better, but most of it moves at human speed. I build computer simulation systems that handle the mechanical parts of that loop computationally, so designers can spend more time where it matters most: designing.

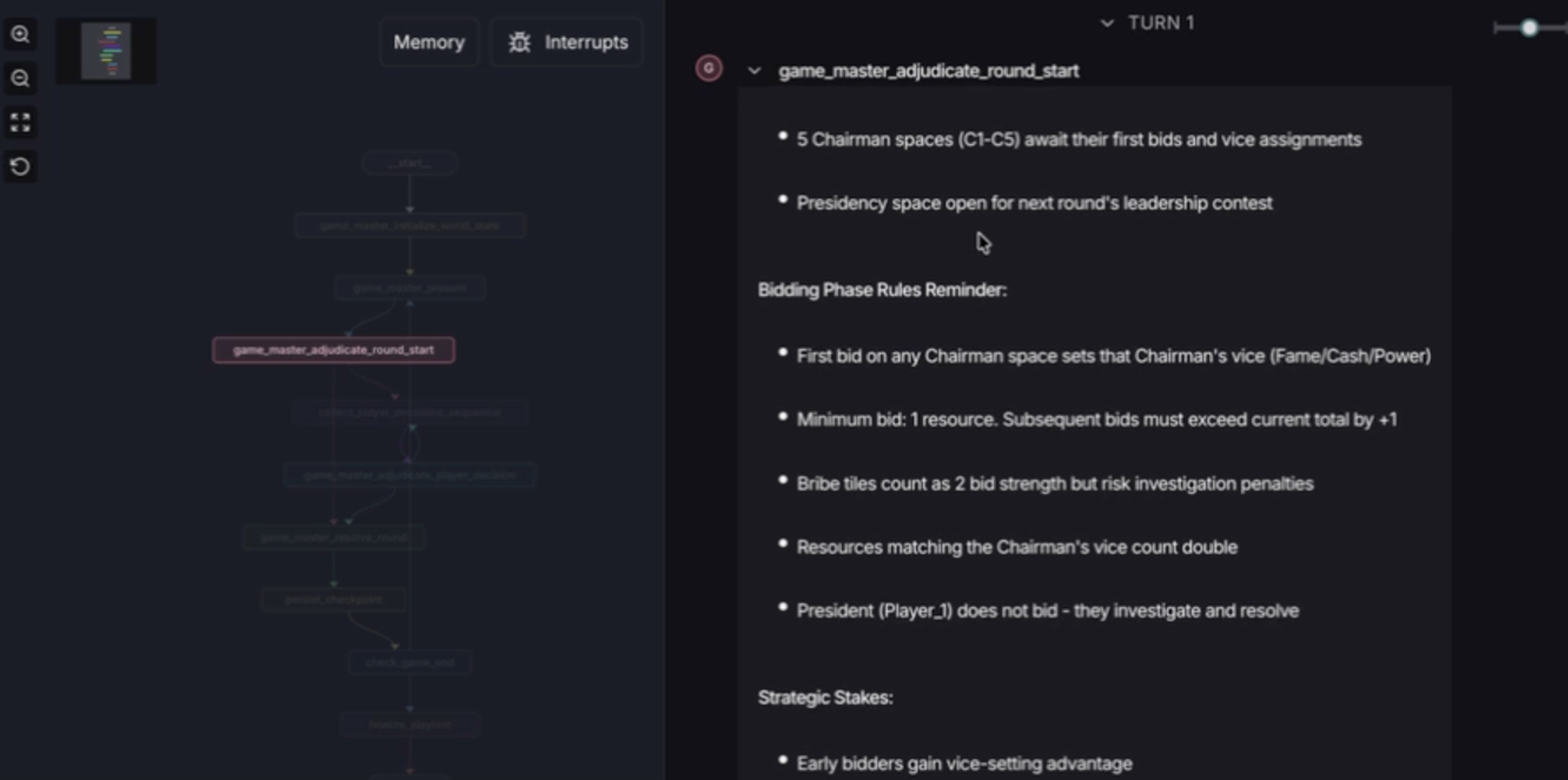

Live simulation run: stepping through a full game of The Beautiful Bid in real time